When AI companies make big claims about future capabilities, financial markets move and media amplifies. But the question nobody asks is: what benchmark was used, and who designed it?

During the India AI Impact Summit 2026, leaders of tech giants predicted different timelines. Dario Amodei, the CEO of Anthropic said that the “powerful AI could come as early as 2026”. Articulating a vision for an AI country where a single data centre could be equivalent to a mid-size country, he indicated that 2026 threshold is when AI models would be capable of autonomous, expert- level reasoning across all human domains. Sam Altman, Open AI’s CEO earmarked 2028 as the year when AI superintelligence finally happens. Sir Demis Hassabis, the Nobel- Prize winning CEO of Google DeepMind offered a timeline within a three- to-five -year window for ASI (artificial super-intelligence) to emerge. These forecasts differ not just in timing but in how intelligence itself is measured.

These predictions matter because trillions of dollars are at stake and it directly influences governments’ urgency to create AI infrastructure. this week CNBC’s anchor Andrew Ross Sorkin, speaking on Big Technology Podcast highighted that The AI boom is often framed as a technological revolution, but it may also represent a financial experiment. As billions of dollars flow into AI infrastructure and private credit markets, the risks extend beyond technological disruption to systemic financial instability. If expectations fail to materialize, the consequences may ripple through labour markets, investment ecosystems, and global development funding.

ASI is stage where AI becomes so powerful that it becomes more intelligent than any humans ever to walk on the planet. Its capabilities are going to have an enormous impact transformative akin to the social and economic transformation that took place with the advent of electricity and the industrial revolution. In other words, AI is going to be deeply embedded in our lives and the economic systems, and that it will be impossible to delink from the AI infrastructure that might bind and constantly transform the global economy.

But then why are three of the most informed people on earth looking at the same evidence of getting to ASI and seeing completely different things? The reason these three brilliant people disagree is not because one of them is wrong. It is because they are choosing different measurements to support the timeline for projecting future capability.

Also, the big AI giants are constantly exploring new capabilities which have a direct impact on capital inflows into the overall ecosystem. So benchmarking and new capabilities become a part of market signalling supported by good media amplification and strategic communications, and not just science. For example, MIT Technology Review reported this week that Open AI is throwing all its resources in creating a fully automated researcher.

Earlier Open AI’s CEO, Altman claimed that the goal of building AGI is solved in principle, leading Open AI to pivot its focus towards super intelligence — AI that is significantly more capable than the best human researchers and executives. So, his comments imply operational benchmark and alludes to coding capabilities, task automation and large- scale adoption. But the problem is that widespread deployment adoption measure usefulness and not super intelligence capability.

However, there is one benchmark that is being used to measure AI’s fluid intelligence: the Abstract and Reasoning Corpus for Artificial General Intelligence (ARC-AGI)., Created by François Chollet, an AI researcher at Google and creator of Keras, one of the most widely used AI development frameworks in the world, the benchmark is designed to test a model’s logical reasoning and skill acquisition abilities on unseen tasks or tasks that is easier for humans but difficult for AI such as solving logic- based puzzles.

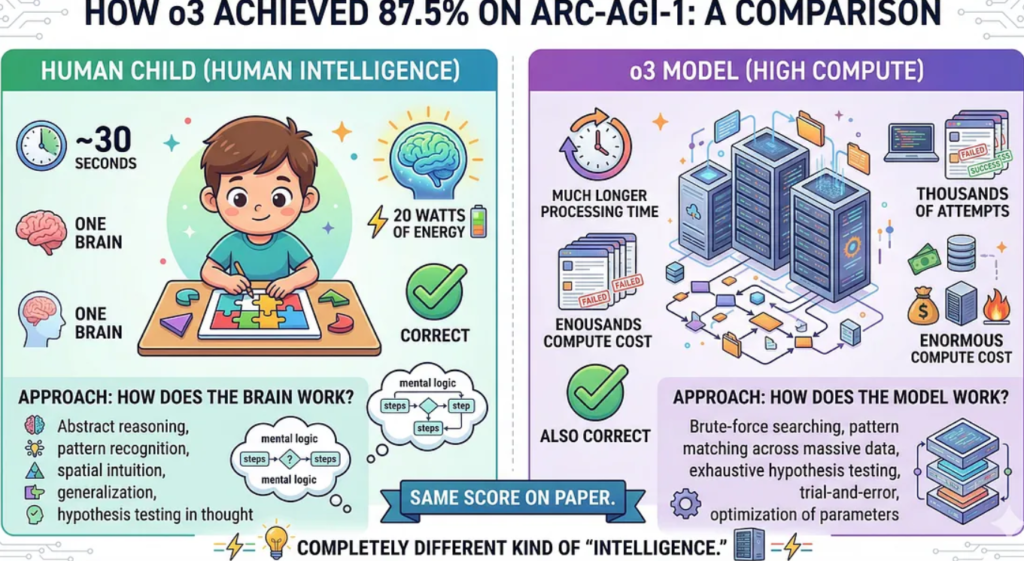

The earlier LLMs followed a path. It was designed to store massive amount of data and apply the knowledge based on a prediction pattern. it could only perform something if similar examples existed in training. But then in 2024, the o3 model which scored 87.5% on ARC- AGI — 1 test. The jump was a significant improvement from a year earlier. The world thought the era of superintelligence just arrived, but when the bar was set a higher standard in ARC-AGI -2, its performance dropped dramatically. The same model scored approximately 2.9% to 3.0% on the ARC-AGI-2 semi-private evaluation where human scored 60 percent. What changed.

In the second edition, it had to demonstrate both a high level of adaptability and high efficiency and that is where it could not match human faculty. So, humans had an edge. What becomes obvious is anyone who designs the test, controls the score. There was another catch behind the 87.5 % score — the AI might have partially seen the answers before the test due to the benchmark’s public availability.

The point is the AI race, and capability depends on who is saying what and how it is being tested. Every model generates a lot of excitements; tons are written about it. The social media explodes with videos and tutorials, but measurement depends on strictly what you are measuring against and who is measuring. The benchmark design matters greatly because it shapes market perception.

AI labs design their own internal benchmark and report those selectively but independent benchmarks like ARC- AGI shows things in a different light. What the AI labs claim is not wrong either. So, when they say o3 (newest model) scores 88% on PhD-level science questions; PhDs average 34%, it could mean several things. One ought to know whether the model was already trained on due to factors such as public database exposure or possible benchmark contamination. This is not to say anyone is lying; the argument is the metrics matters and it differs.

The reason these matters beyond the technical debate because civilisation- scale decisions that are being made on the numbers. The scale of financial and infrastructure commitment is enormous. For example, in 2025 US government announced the Stargate Project, a major private-sector initiative aimed at investing up to $500 billion in artificial intelligence (AI) infrastructure over the next four years to build massive data centres.

At the Stargate announcement, OpenAI CEO Sam Altman called it “the most important project of this era,” claiming it could lead to cures for cancer and heart disease, as well as enable the creation of AGI — a benchmark his and other companies are working fervently to hit. In January 2026 at the India AI Impact Summit, Indian companies announced investment of hundreds of millions to build world-class-data centres and even signed up bilateral deals with US based AI companies like Anthropic and Open AI.

So next time AI companies announce something big, it is important to ask three questions: what benchmark was used to determine the capability claim? Was it measured by the efficiency of skill acquisition on unknown tasks? What was the compute power and cost — what kind of chips were used? Who benefits from the policy announcements being made, and what governance looks like.

References

BlueDot AGI Strategy course: https://bluedot.org/courses/agi-strategy . The author recently completed this course and thanks the course instructor Filip Alimpic

Peuyo. T (2025). The Most Important Time in History Is Now. https://unchartedterritories.tomaspueyo.com/p/the-most-important-time-in-history-agi-asi?utm_source=bluedot-impact