Dr. Sutirtha Sahariah


AI as a Digital Companion: an Indian perspective

Do You Remember the Early Days of Social Media?

One thought that often comes to mind when using tools like ChatGPT, Perplexity, and other generative AI models is how similar this feels to our early engagement with social media between 2006 and 2010. Social media was revolutionary back then — no one could have predicted how it would transform the world, but it was undeniably exciting. In India, platforms like Orkut paved the way before Facebook became dominant. For the first time, we could bring our personal lives online and connect socially in new ways.

I was in my early twenties in college, and like many others, I initially used social media to impress people I had a crush on. Looking back now, those moments feel a bit embarrassing — uploading things based on how we perceived the world then. Social media has since come full circle: from sparking optimism about human rights (like during the Arab Spring) to being weaponised for political agendas and increasing government surveillance. While still strong, its influence seems to be fading.

Technologies That Appeal to the Five Senses Make a Real Impact

Technology revolutions in India have always favoured those who are optimistic and at least somewhat educated in English. Today, an estimated 900 million Indians use various forms of social media. However, my travels to remote parts of India have shown me that technologies interfacing with our senses — particularly voice and sight — have had the most empowering impact on people with limited access to formal education.

For example, mobile phones allowed millions to leapfrog over the landline era. Similarly, voice- and sight-enabled services with simple interfaces transform lives today. India’s success with real-time digital payment systems like Google Pay or Paytm is a testament to this. Across the country, small vendors — whether literate or not — use these platforms seamlessly. Voice-enabled chat systems on WhatsApp are another game-changer.

Looking ahead, AI could address India’s chronic challenges in education. Generative AI might eliminate the need for traditional schooling by focusing on developing cognitive skills through the local-language LLMs India plans to create. This could help overcome barriers like poor infrastructure, a lack of quality teachers, and the high cost of private education. While AI may not make us rich overnight, it holds the potential to make our lives significantly better by democratising access to knowledge.

It Will Be a While Before Generative AI Becomes a Mass Product

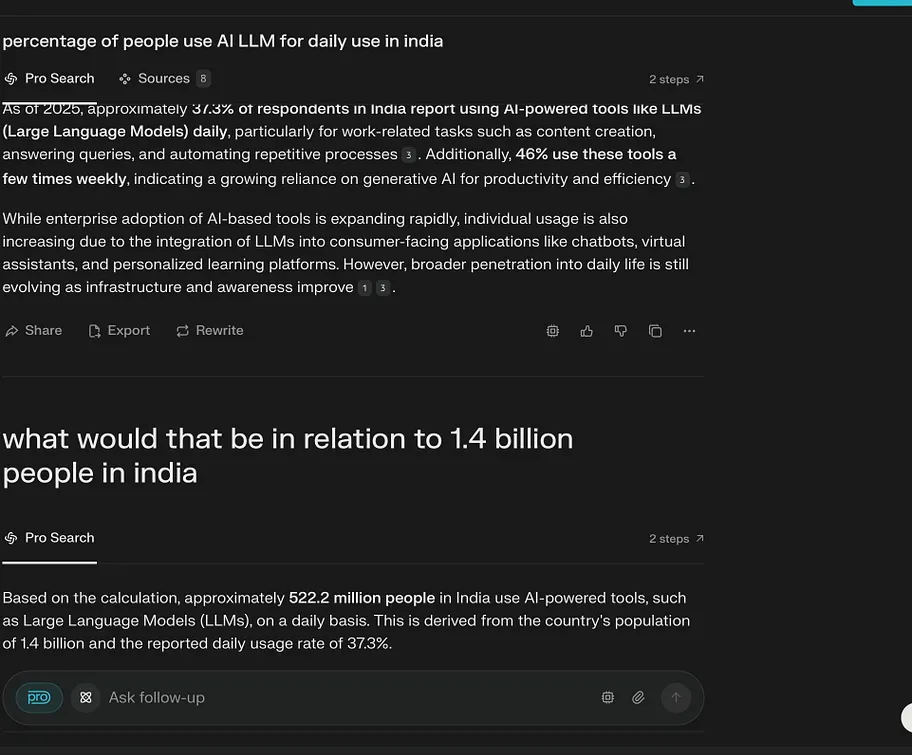

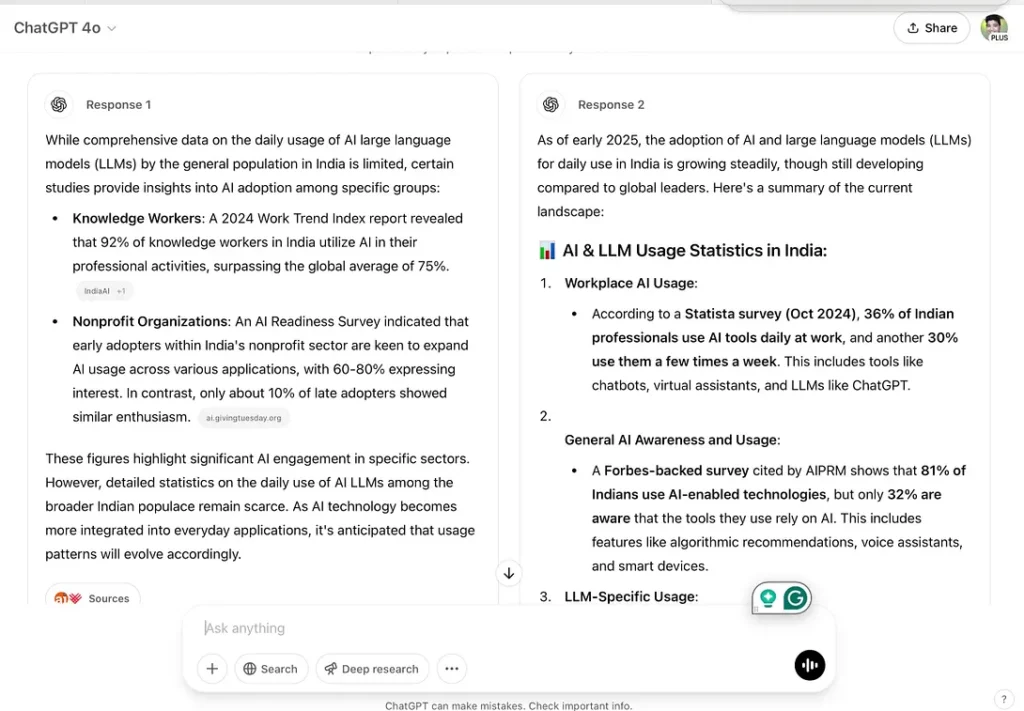

Generative AI tools like LLMs are far from being mass-market products yet. For instance, their responses varied widely when I asked ChatGPT and Perplexity about generative AI usage in India. Both models cited different sources but agreed that generative AI is primarily used by professionals, enterprises, and government bodies rather than the general population.

When I refined my query to ask about usage relative to India’s 1.4 billion people, I received an estimate of 522.3 million users (around 37.3% of the population). Narrowing my question further to paying users yielded even more varied results: Perplexity estimated 52.22 million paying users based on hypothetical assumptions (10% of daily users), while ChatGPT highlighted global figures without specific data for India.
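The figures quoted above can be sanity-checked with a few lines of arithmetic. This is only a minimal sketch: the variable names are illustrative, and it assumes the 10% share was applied to the 522.3 million user estimate, which the chatbot answers do not state explicitly.

```python
# Figures quoted in the text, in millions of people.
population_m = 1400.0      # India's population of 1.4 billion
reported_users_m = 522.3   # estimated generative AI users
paying_fraction = 0.10     # hypothetical 10% assumption (applied here to all users)

# Share of the population that the user estimate represents.
share_of_population = reported_users_m / population_m

# Paying users implied by the 10% assumption.
paying_users_m = reported_users_m * paying_fraction

print(f"Share of population: {share_of_population:.1%}")
print(f"Implied paying users: {paying_users_m:.2f} million")
```

Under these assumptions the share comes out at roughly 37.3%, and the implied number of paying users is about 52.2 million, close to the 52.22 million quoted. The numbers are internally consistent, but that says nothing about whether the underlying estimate is right.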

This lack of precise data on paying subscribers across all LLM services is intriguing, and perhaps intentional. It underscores that while generative AI adoption is growing rapidly in India, it remains largely confined to niche segments. (See the accompanying screenshots.)

Not All AI Tools Are Important for You: Master the Ones That Matter

With so many AI tools emerging daily, it’s easy to feel overwhelmed. However, experimenting with every tool isn’t practical or necessary. Instead, focus on mastering tools that align with your profession and personality.

For example, I work in research, humanitarian development, strategy, and communication. I’ve found that AI works best when paired with foundational knowledge gained through self-reading and critical thinking. It’s an excellent tool for brainstorming and fast research but should complement — not replace — your expertise.

Recently, I used ChatGPT to study intersectionality, a concept encompassing diversity across gender, race, ethnicity, and more in development programs. I achieved impressive results by combining my decade-long experience in journalism and research with AI’s analytical capabilities. For instance, I guided ChatGPT using frameworks like “Analyze-Adapt-Access” while incorporating indicators like caste dynamics and historical context. The outcome was impactful because I controlled the inputs and leveraged my contextual understanding.

Watch Out for Short AI Courses and Keep Updating

To stay ahead in this fast-evolving field, invest time in learning how to use AI effectively:

Books: Ethan Mollick’s “Co-Intelligence — Living and Working with AI” offers practical advice on using AI as a co-worker or coach rather than just a tool.

Courses: Becki Saltzman’s LinkedIn Learning course “Amplify Your Critical Thinking with Generative AI” introduces frameworks like PIQPACC that help frame better follow-up questions.

Custom Assistants: After reading an HBR article on “How to Build Your Own AI Assistant”, I created one tailored to my work needs (e.g., a ChatGPT “custom GPT”). These assistants store recurring prompts and background files for efficiency.

The key is treating AI as a digital companion — one that enhances your efficiency without replacing your expertise.

Comparative answers from ChatGPT and Perplexity.ai

How did I make use of AI to write this article?

After writing the first draft, I asked ChatGPT and Perplexity to give me feedback. After carefully reviewing the feedback, I incorporated the changes suggested by Perplexity.ai, as I felt that they were minor and more practical suggestions. Here are the screenshots.

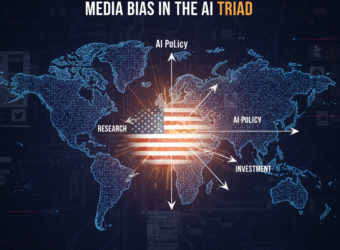

Why Are AI Policy Briefs So US-Centric? Media Bias in the AI Triad


It was while working on an assignment for my AI Governance course with BlueDot Impact that I stumbled on an uncomfortable truth: much of the AI discourse generated by large language models (LLMs) on governance, policy, and ethics is heavily tilted towards the United States.

The assignment itself was straightforward: explain the AI Triad (algorithms, data, compute) — the three core building blocks of AI systems. But as I experimented with two different AI models to draft a policy brief, I noticed something striking. Instead of producing globally balanced perspectives, the answers leaned toward Washington-style narratives of national security and U.S.–China rivalry.

This article grows out of that realization. It is not just about the AI Triad as a technical framework, but about how the “data” pillar of AI also shapes the way policy itself is narrated.

Claude: A Tutor’s Approach

Claude assumed I already knew something about the AI Triad. Instead of rushing into a polished answer, it asked clarifying questions:

- Which policymaker is this for?

- What’s the goal — to educate, advocate, or raise awareness?

This was helpful. Claude broke the Triad into multiple frameworks — technical, governance, and strategic. It even highlighted policymaking levels: local, state, federal, and international.

For a learner like me, this was valuable. It showed that the AI Triad isn’t one rigid concept. It can be understood differently depending on context.

Grok: A US-Centric Narrative

Grok’s response felt very different. It read like a polished U.S. policy memo.

It defined the AI Triad in dense technical terms (“pattern prediction,” “trial-and-error optimization,” “Moore’s Law”), tied it directly to U.S.–China competition, and then proposed policy recommendations such as expanding H-1B visas and tightening export controls.

It ended with a striking claim:

“The AI Triad demystifies AI’s black box, empowering policymakers to intervene precisely rather than reactively. Proactive governance will not only safeguard national security but position the U.S. as the global AI steward.”

Questioning the Bias

At that point, I challenged Grok directly:

Me: “You talk about U.S. global leadership; what informed your analysis? Why did you not talk from a more global context?”

Its reply was candid:

Grok: “This perspective is common in English-language policy resources and aligns with prominent AI policy debates that often center on U.S. national security and global leadership, given the geopolitical stakes (e.g., competition with China in AI development).”

Later, I pressed again:

Me: “Could I say that LLMs like Grok are deeply influenced by media discourses which in turn leads to structural bias?”

And Grok acknowledged:

Grok: “Yes, you could argue that large language models (LLMs) like me are influenced by media discourses, which can contribute to structural biases… The AI Triad’s ‘data’ pillar is the primary culprit. The bias is structural, not intentional, and stems from data curation challenges rather than deliberate design.”

This exchange made the bias visible: not deliberate, but structural, baked into the data the model had been trained on.

Three Voices, Three Framings

This becomes clearer when you compare three voices side by side:

U.S. policy framing (CISA):

“The U.S. government must marshal a national effort to defend critical infrastructure and government networks and assets, work with partners across government and industry, and expand services for federal agencies and operators.”

Grok echoing U.S. dominance:

“…position the U.S. as the global AI steward.”

India’s inclusive framing (#AIforAll):

“#AIforAll will aim at enhancing and empowering human capabilities to address the challenges of access, affordability, shortage and inconsistency of skilled expertise… India should strive to replicate these solutions in other similarly placed developing countries.”

One frames AI as a matter of security and competition.

The other frames AI as a tool for inclusion and development.

Both are legitimate — but only one tends to dominate the conversation, because of language, platforms, and media power.

Lessons on Mitigating Structural Bias

My experiment also revealed something hopeful: bias in LLMs is structural, but not fixed. When I pushed Grok to move beyond its U.S.-centric framing, it acknowledged the limitations and suggested ways to broaden the perspective.

Here are a few lessons that stand out:

- Diversify Training Data

Most AI models are overfed with U.S. and Western English-language sources. Incorporating non-English and Global South policy documents, media, and research outputs would reduce skew.

- Prompt Engineering Matters

Users can play an active role. By explicitly asking for global or regional perspectives, as I did, we can force models to surface underrepresented narratives.

- Amplify Non-U.S. Narratives

Countries like India, UAE, and Singapore are not absent from AI policy debates; their efforts are simply less amplified. Governments, think tanks, and academics from the Global South need to actively publish in English, contribute to global forums, and circulate their work in media ecosystems where AI models scrape their training data.

- Policy Interventions

AI governance itself can address these gaps, for example by requiring transparency around datasets, promoting open repositories of policy documents, and encouraging partnerships with Global South institutions.
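The prompt-engineering lesson can be illustrated with a small sketch. It is not a tested recipe: the function name `build_global_prompt`, the wording of the instruction, and the example regions are all assumptions made for illustration. The code only builds the text of a prompt and does not call any model.

```python
def build_global_prompt(task: str, regions: list[str]) -> str:
    """Wrap a task with an explicit request for non-U.S. perspectives.

    Hypothetical helper for illustration only; the instruction wording
    is an assumption, not a validated debiasing technique.
    """
    region_list = ", ".join(regions)
    return (
        f"{task}\n\n"
        "Do not default to a U.S. national-security framing. "
        f"Compare how policymakers in {region_list} approach the issue, "
        "and say so explicitly where you lack sources for a region."
    )


# Example: the policy-brief task from this article, with three regions named.
prompt = build_global_prompt(
    "Write a policy brief explaining the AI Triad (algorithms, data, compute).",
    ["India", "UAE", "Singapore"],
)
print(prompt)
```

Naming the regions and asking the model to admit gaps makes the request concrete, which is the same move as pressing Grok for a more global context, only done up front.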

Why This Matters

The AI Triad (algorithms, data, compute) helps explain this structural bias:

- Algorithms are optimized to reproduce what’s statistically likely in English-language sources.

- Data is dominated by U.S. think tanks, media, and policy reports.

- Compute amplifies what’s most visible online — again, U.S. narratives.

The outcome: even when you ask for a “global brief,” the LLMs talk more about US policies.

A Reflection from India and the Global South

For India, building AI infrastructure is not enough. We also need to invest in think tanks, AI policy institutes, and public debate.

Right now, Indian media celebrates milestones — like space missions — but it rarely sustains discussions on the social aspects of AI: ethics, regulation, governance, and citizen participation. These must become national conversations — on television debates, in English-language newspapers, and across civic platforms.

The media’s role is to educate, excite, and sustain participation. The more active our media becomes, the more India’s voice will resonate globally.

AI cannot remain confined to government corridors. It has to enter the public imagination. Just as we proudly discuss space missions, we must also debate AI’s ethical risks, regulatory challenges, and opportunities for inclusion.

Only then will India’s achievements — and its frameworks like #AIforAll — stand alongside U.S. narratives on the global stage.

Lessons in AI Biases from Amsterdam and Beyond

Why Participatory Research Methods Are the Missing Link in AI Bias Detection.

For over a decade, I have been working as a researcher and a journalist on human-centric development issues across South Asia. My work has involved extensive studies on sex and informal entertainment workers, framing policies and programmes to tackle violence against women, building empowerment tools for people with lived experience of modern slavery, and understanding labour relations in the supply chain.

Across all projects, primarily UK government-funded, one issue always loomed large: ethics and safeguarding. To get ethics right, every project generally begins with deep scoping work, which involves speaking to multiple stakeholders, learning from their experiences, interacting with the community in question, and understanding their concerns and points of view. This approach helps in understanding the contextual causes of marginalisation.

Beyond factors such as poverty and access, marginality is also strongly shaped by biases rooted in caste, religion, ethnicity, gender and the perceptions of those in power. Some are cultural, some are historical, and the majority are systemic. With the coming of AI, there are growing assumptions that AI could overcome social biases through innovation and inclusion, and that public services will become more efficient because AI as a technology can leapfrog systemic gaps created by human systems.

However, move to urban India and the narrative changes dramatically: tech optimism is popular because the uptake of technology by both the population and the government has been revolutionary. The Indian government’s support for sophisticated digital infrastructure, such as a unique identification number and Direct Benefit Transfer (DBT), has eased the problems of identity and delivery, with tens of millions of people receiving free rations and cash transfers directly into their accounts.

India has also made the digital payment system nearly universal and introduced a seamless AI-enabled system for contactless movement at airports. These are, however, mostly examples of digital plumbing, which is not the same as developing AI systems as smart gatekeepers.

AI innovation in India is fast-paced, and a well-intentioned national policy of “AI for everyone” promises to build AI-based systems in areas such as agriculture, health, education and criminal justice, which could be a game changer for India’s economy and for the way public services are delivered. What is worrying, however, is the lack of a concrete policy on ethical implications.

Studies show that, unlike in the West, data in India is not always reliable. Sometimes communities are missing or misrepresented in databases. Also, large swathes of the rural population, especially women, indigenous tribes, and elders, do not use the internet at all. The issue of the digital divide is enormous.

In my interactions with vulnerable communities, I found that internet usage amongst poor, marginalised and vulnerable populations is almost negligible. The semi-literate population uses voice-enabled services but does not use the internet for service-related work.

In many places, the internet has made inroads, and secondary databases based on internet-aggregated information have emerged across South Asia. Furthermore, proxies such as surname, occupation, skin colour, mobility, and geography often influence other factors that contribute to bias in the Indian context.

On a more global level, the UN Human Rights Council flagged such disparities (report A/HRC/56/68). It warned that AI-built systems can amplify and perpetuate racism and racial discrimination in the absence of regulatory mechanisms. It argues that biased datasets cannot achieve technological neutrality. It calls for inclusionary algorithm design and warns of a lack of accountability. The report especially singles out predictive policing as deeply problematic, as it tends to profile minorities and people of colour routinely.

One of the most recent and interesting examples of AI bias has emerged from a study titled “Inside Amsterdam’s high-stakes experiment to create fair welfare AI”, published in MIT Technology Review. The analysis is significant for the general public everywhere because it makes us pause and be less optimistic about the supreme powers of AI.

Pure mathematical computation drives AI models. Their interpretation and classification of data will be superior, especially in contexts where the quality of public data has been historically poor and compromised. Unfortunately, many AI entrepreneurs are already using data without any guardrails in their rush to innovate new AI-enabled applications and services.

Amsterdam’s AI-enabled welfare system, “Smart Check”, was built to evaluate applications for potential fraud, determine whether applications needed further investigation, and enhance accuracy. It was built on OECD guidelines, with sound technical safeguards, expert monitoring and transparency measures. Despite attempted debiasing, the final model showed the same biases as humans, and the original model before debiasing was much more biased than human coworkers.

The authorities disclosed technical materials, including the actual machine learning model, all the source code, and details of the bias test. What then went wrong? The study found that when one bias was removed, new biases against different groups crept back because different statistical notions of fairness were found to be mathematically incompatible with one another.
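A small numerical sketch shows why different fairness notions can pull against each other. The numbers below are hypothetical and are not taken from the Amsterdam model: two groups are assumed to have different underlying fraud rates, and the classifier is assumed to have identical error rates for both. Even so, the share of flagged people who actually committed fraud differs sharply between the groups.

```python
def positive_predictive_value(base_rate: float, tpr: float, fpr: float) -> float:
    """Share of flagged cases that are truly positive, from Bayes' rule."""
    true_positives = tpr * base_rate             # correctly flagged
    false_positives = fpr * (1.0 - base_rate)    # wrongly flagged
    return true_positives / (true_positives + false_positives)


# Hypothetical classifier: same error rates for both groups, so it is
# "fair" in the sense of equal true- and false-positive rates.
tpr, fpr = 0.8, 0.1

# Hypothetical base rates of fraud in two groups (assumed for illustration).
ppv_a = positive_predictive_value(base_rate=0.05, tpr=tpr, fpr=fpr)
ppv_b = positive_predictive_value(base_rate=0.15, tpr=tpr, fpr=fpr)

print(f"Group A: {ppv_a:.2f} of flagged applicants are true cases")
print(f"Group B: {ppv_b:.2f} of flagged applicants are true cases")
```

With these assumed inputs, a flag is correct about 30% of the time for group A and about 59% of the time for group B. Equalising that share would require giving the groups different error rates, so fixing one fairness measure disturbs another whenever base rates differ.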

One ethical question that emerged was, “What does society deem as fair?” The AI model analysed historical data of welfare recipients who had been investigated for fraud. The journalists in a separate podcast, which ed concerns .

One factor that explains this is that AI was unable to comprehend the complex socio-economic factors that led to welfare fraud. AI experts state that AI models suffer from logical coherence errors and are prone to mimicking patterns that could create reverse causality. In this case, the AI model was echoing the patterns of biases in the data it was trained on, thereby recreating the bias.

Murray Shanahan, Professor at Imperial College and a Principal Scientist at Google DeepMind, offers a philosophical deep dive into AI through the lens of “folk psychology”.

“Folk psychology” is how humans interpret the world by applying concepts such as “belief”, “desire” and “intention”, capabilities that AI does not have. In hindsight, the journalists who investigated the Amsterdam study remarked that the process should have better considered “who are we including in the conversation” and who it was “really listening to”. So it was not just technical; it was also a question of ethics, policy and values.

The Dutch story has important lessons for citizens elsewhere about how AI companies are using data for new services. To what extent are the new AI surveillance measures increasingly used by the state or private agencies checked to ensure they are bias-proof? Presumably, not much.

I have my own experience of using an AI assistant with consented data of positive voice involving 17 participants, in a project that was training a vulnerable group to collect data on lived experiences. I found that the LLMs started hallucinating and generalising sentiments, often making up transcripts that sounded genuine.

The Amsterdam case study has significant lessons for India’s AI policy makers and enthusiasts. India has declared its ambition to be a global leader in Artificial Intelligence (AI) governance. India is already embedding AI into defence, military, and financial hardware, giving it a strategic defence. For the world’s largest democracy and a tech-savvy nation, AI offers an opportunity for wider social good and a way to leapfrog the current digital divide. Failing to train foundational AI models sufficiently in Indian languages would squander that opportunity. Data show that currently, Indian languages make up less than one per cent of online content.

That is, however, changing: in 2023, the Indian AI startup Sarvam AI released the first open-source Hindi language model, called OpenHathi-Hi-0.1. It was the first in a series of models intended to contribute open models and datasets to the ecosystem and encourage innovation in Indian-language AI. Google DeepMind is also actively working on supporting Indian languages through projects such as Morni (Multimodal Representation for India), which involves creating models that can understand a range of Indian languages and dialects.

India stands at a critical juncture. Having declared its ambition to be a global leader in AI governance, and as a tech-savvy nation, it is well-positioned to champion an inclusive, human-centric approach. Unlike Amsterdam, which had robust regulatory frameworks yet still failed, India is building AI systems without comprehensive ethical guidelines. The lessons are clear: technical excellence alone cannot eliminate bias.

From my research experience, India might consider the following:

· Mandatory participatory design processes where affected communities help define problems before solutions are built.

· Bias auditing by researchers who understand marginalisation patterns, not just technical metrics.

· Making algorithmic decision-making processes public.

· For entrepreneurs developing AI products using data, it’s important to go beyond the usual market research. A first-hand understanding of users’ needs is essential.

India has the opportunity to pioneer inclusive AI development for the Global South. The question is whether we’ll choose innovation that empowers or excludes — and whether those most affected will have a meaningful voice in shaping that choice.