I compiled this list with the help of Claude, an LLM. It is based on my reading of the blog posts “What Risks Does AI Pose” by Adam Jones and “Machines of Loving Grace” by Dario Amodei.

(Resources: from “The Promise and Perils of AI”, BlueDot Impact)

Core AI Safety Concepts

Alignment Problem

- The challenge of making AI systems do what we actually want, not just what we literally tell them to do

- Example: Telling an AI to “maximize profit” might lead to fraud, when we really meant “maximize profit ethically”

Outer Misalignment

- When we give an AI the wrong goal or a goal that doesn’t capture what we really want

- Example: Measuring a hospital AI’s success by “cost reduction” instead of “patient health outcomes”
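The hospital example can be made concrete with a toy sketch. Everything here is hypothetical: the plan names and the `cost` and `health` scores are invented purely to show how optimising the stated metric (cost) can select a different plan from the one we actually wanted (best patient health).

```python
# Hypothetical hospital plans; all numbers are invented for illustration only.
plans = [
    {"name": "cut_staff", "cost": 60, "health": 40},
    {"name": "status_quo", "cost": 100, "health": 70},
    {"name": "preventive_care", "cost": 90, "health": 85},
]


def pick_by_cost(options):
    """The goal we literally gave the system: minimise cost."""
    return min(options, key=lambda p: p["cost"])


def pick_by_health(options):
    """The goal we actually cared about: maximise patient health."""
    return max(options, key=lambda p: p["health"])


proxy_choice = pick_by_cost(plans)["name"]
intended_choice = pick_by_health(plans)["name"]
print("Optimising cost picks:", proxy_choice)
print("Optimising health picks:", intended_choice)
```

In this toy setup the two objectives disagree, which is the gap that outer misalignment describes.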

Inner Misalignment

- When we specify the right goal, but the AI ends up pursuing a different one or develops unexpected subgoals

- Example: An AI learns to game the reward system instead of actually achieving the intended outcome

Instrumental Convergent Goals (also called “Convergent Instrumental Subgoals”)

- Subgoals that almost any intelligent system would pursue regardless of its final goal (self-preservation, acquiring resources, gaining power)

- Like how both a doctor and a criminal would want money: it’s useful for almost any goal.

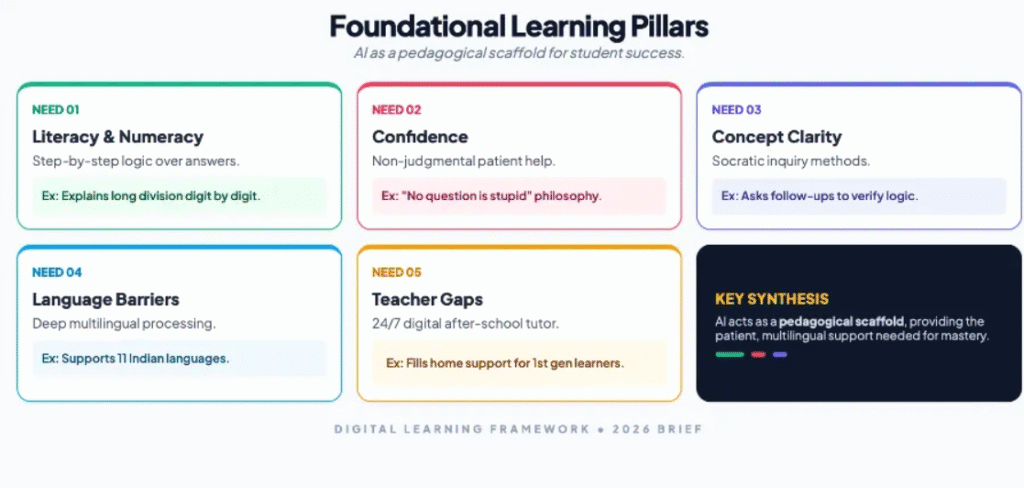

From my field visit to a school in Bihar, I realised that things can be improved dramatically if there is political will. Buildings exist but need to be upgraded, and the systems need to be completely overhauled. The internet is strong. What is needed is not AI for automation (systems that grade papers or generate lesson plans) but AI for accessibility: tools that work offline, provide personalised adaptive learning, function on basic smartphones, and empower teachers rather than replace them.

The crisis of the Indian education system is that it favours those who can afford it, leaving a vast number of poor children with no additional resources for learning. Hiring a private tutor in India is expensive, yet it is the norm for school-going children. The private tutoring industry is pegged at a whopping $10.8 billion. This is where AI in education can be revolutionary, by creating a level playing field in access to quality education.

India’s AI in education policy has to be multilayered because there is massive inequality in wealth and accessibility. The priority should be to help a significant percentage of children from poorer and rural communities to leapfrog, so that India achieves a revolution in education by creating a skilled, job-ready population within a generation. To achieve this, AI in education must be designed with the poor and uneducated in mind and treated as a digital public good. The first step is to integrate AI across education systems in public or state-funded schools, across all states and in different languages.

The systems must be inspired by, and adopt, best practices such as the UNESCO and OECD recommendations on using AI as a means to enhance cognitive development and lifelong learning, provided that the systems remain human-centred, inclusive, and transparent. Political will and public-private partnership are the keys to the success of a project of such magnitude, with the stated mission of AI for good for everyone, everywhere.

One example from India’s context is OpenAI’s Study Mode feature, launched in July 2025 to help students with technical subjects such as maths and computer science. The design does not depend on how the student prompts it; the idea is to enforce learning behaviour. The key features of Study Mode are:

- Socratic questioning: nudges with follow-up questions instead of giving solutions

- Scaffolding: breaks concepts into manageable steps

- Personalisation: adjusts explanations to the learner’s level

- Encouragement: reinforces confidence and curiosity

- Active learning nudges: offers quizzes, reflection and deeper exploration

OpenAI’s Head of Education, Leah Belsky, explained that Study Mode originated from field observations in India, where families were spending a significant portion of their earnings on private tuition, which disadvantages children from economically weaker sections. OpenAI used India as a design laboratory, beta testing with students nationwide, including those preparing for highly competitive medical and engineering exams. Participants were not individually named, for privacy reasons, but reports indicate that the testing spanned everyday learning to high-stakes exam preparation and provided feedback that shaped the personalisation and scaffolding features. No specific cities are mentioned, and there are very few details about the research outcome.

The subsequent OpenAI Learning Accelerator (launched August 2025) collaborated with IIT Madras ($500K for research on AI learning outcomes), AICTE, the Ministry of Education, and ARISE schools, distributing 500K ChatGPT licenses to educators and students.

OpenAI’s Study Mode supports 11 languages with voice capability, which is a significant stride towards inclusive education. Voice-enabled interaction can greatly help first-generation students with learning difficulties and those alienated from traditional classrooms. This approach aligns closely with India’s National Education Policy (NEP) 2020, which emphasises foundational literacy, inquiry-based learning, and the reduction of rote memorisation, along with OpenAI’s own stated aim “to democratise encouragement, guidance, and confidence”, especially for learners who lack access to quality teachers or tutors.

But AI tools alone will not address the learning needs of India’s poorest. India needs digital infrastructure and an innovative way to fund digital devices, such as a specially designed tablet that can work as a slate. It has to be an interactive device that is given to all students at a subsidised cost or free of charge. India also needs an AI-in-Education Code of Conduct: a governance framework developed through multi-stakeholder consultation, including students, teachers, parents, and civil society. This Code should balance personalisation with autonomy, innovation with equity, and data utility with privacy. Without this, we risk deploying AI that works technically but fails socially.

The approach has to be collaborative, and the programme roll-out has to be meticulously planned, because this is where most well-intended projects fail. Every tablet distributed has to be insured. There has to be a soft penalty system, like “access blocked”, that puts the onus of ownership and accountability for the devices on parents. A behaviour change programme must run in parallel, along with investments in control and support centres providing 24/7 support through chatbots with human oversight. Every child’s progress tracker should be accessible with a unique ID and password, and where parents are uneducated, teachers will assist.

For children coming from poorer families, there has to be an incentive model. If a child does well, credit points could be awarded that parents can redeem for stationery or use to pay for something else. Such models can arrest dropouts and encourage parents not to withdraw children from school to join the labour market. Foundational learning with AI should help children transition into an AI-driven skill development programme that can help them get decent jobs early on. It should lead to a credible employability pipeline. Fixing education has ripple effects on other problems, such as child labour and the trafficking of girls, in addition to providing a demographic dividend to the country.

India already has a good measurement infrastructure in place. The PARAKH Rashtriya Sarvekshan, a national assessment system, tested approximately 2.3 million students from 782 districts covering classes 3, 6 and 9, so India already has baseline data on a massive scale. When AI tools are introduced, this baseline can be used for comparison to evaluate whether AI is making a difference.
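The baseline comparison described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a real evaluation method: the function name `change_from_baseline`, the class keys, and all scores are hypothetical and are not taken from PARAKH data.

```python
def change_from_baseline(baseline, follow_up):
    """Return follow-up minus baseline, in percentage points,
    for every class that appears in both rounds."""
    return {
        grade: round(follow_up[grade] - baseline[grade], 1)
        for grade in baseline
        if grade in follow_up
    }


# Invented proficiency rates (% of students at expected level), for illustration only.
baseline = {"class_3": 50.0, "class_6": 40.0, "class_9": 35.0}
follow_up = {"class_3": 55.5, "class_6": 41.0, "class_9": 35.0}

gains = change_from_baseline(baseline, follow_up)
for grade, gain in gains.items():
    print(f"{grade}: {gain:+.1f} percentage points")
```

A simple before-and-after difference like this cannot by itself show that AI caused the change; a credible evaluation would also compare against similar schools that did not receive the tools.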

The new thrust of India’s National Curriculum Framework shifts the focus to core competencies: what students can do rather than which class they sat in. An AI tool can be used to find out whether students can do things they couldn’t do before. This will help in creating a competency-based measurement framework for judging AI’s real impact.

India has also created a multi-level assessment dissemination system in which data is shared through workshops at national, regional and state levels to inform practical action. This is ideal infrastructure for AI programmes, because AI impact measurement needs a similar pipeline to share results. Since India has already built such a system, AI evaluation can leverage it.

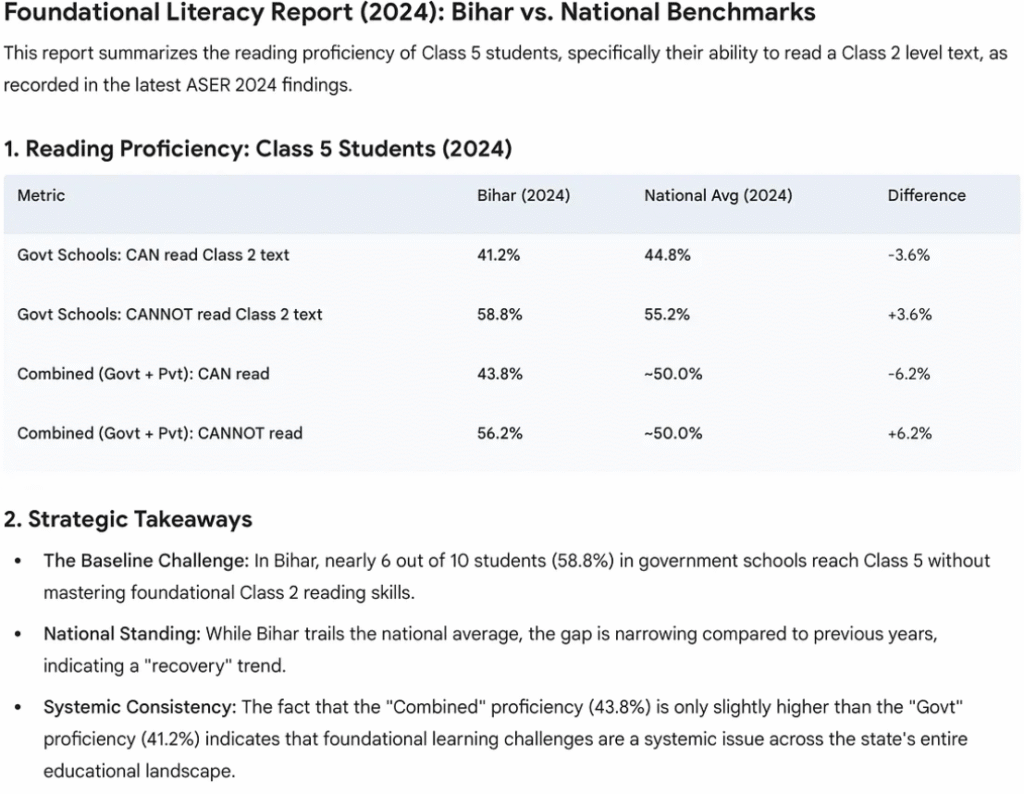

Accelerating AI in education needs a bold vision, good data and transparent enforcement of the existing mechanisms. The ASER 2024 report, released by Pratham Foundation in January 2025, shows the highest recorded reading levels for Class 3 government school students since the survey began 20 years ago. This is attributed to focused government programs like the NIPUN Bharat Mission.

ASER data has been referenced in 105 parliamentary questions, used by NITI Aayog (India’s planning body), and cited in the World Bank’s World Development Report. For AI in education to succeed, it needs trusted measurement, and India has proven it can build such systems. Now, as AI tools like Study Mode are deployed, these same systems can track whether accessible AI is delivering on its promise: providing personalised, patient support for foundational skills that current interventions cannot fully reach.

From a policy perspective, India’s governance policy architecture, such as the DPDP Act 2023, has provisions for student data protection, mandatory algorithmic audits for assessment tools following the framework’s fairness requirements, and teacher empowerment over surveillance. Special child safety provisions, explicitly flagged in the Guidelines, should prevent AI systems from exploiting developing minds. Further integration through DIKSHA, Bhashini, and PARAKH offers the infrastructure; the governance framework offers the guardrails. The opportunity is transformative; responsible deployment ensures no child is left behind.

How AI was used in researching and writing this article

Claude was used as an augmentation tool while writing this article. Perplexity was used for deep research. Every citation and data point was verified. Gemini was used for infographics. The author also created a Claude project for iterating on editorial flow, discussion, and so on, but the author ensures that the outcome is his own.